Garbage in. Garbage out.

AI didn't change that. It multiplied it.

Same model. Same capabilities. Completely different results.

Why? Because AI doesn't "understand." It predicts — based on the context you give it.

It's not a model problem. It's a context problem.

Most teams think bad AI output means the model failed. It didn't. Every large language model generates text by predicting the next token from what is already in its context window. The attention mechanism inside the model weights each token in that window by its learned relevance to the token being generated, and those weights shape what the output reflects. What reaches the context window determines what can be attended to. What gets attended to determines what comes out.

That is not a metaphor. It is the mechanism.

Give the model vague input and it doesn't stop. It fills in the gaps with assumptions pulled from patterns in its training data, and it keeps going. The output is fluent. It is confident. It is also, often, wrong. That is where "confidently wrong" comes from: the model filling in missing context with plausible-looking inventions, then sounding sure about them.

Give the same model structured context — clear requirements, explicit constraints, precise definitions, examples of what correct looks like — and the attention mechanism concentrates. The output sharpens. Same AI. Different discipline. Different outcome.

This isn't about better prompts. It's about better inputs.

The word "prompt" is part of the problem. It implies a single request typed into a chat window. A prompt is often one sentence. An agent's context is everything the model sees at the moment of generation.

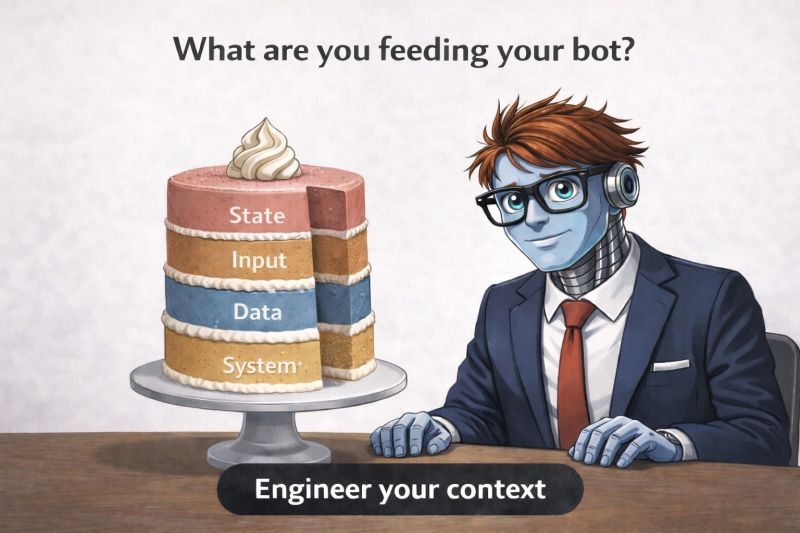

Context is layered:

- System instructions — the durable framing and rules that govern how the agent should behave.

- Data — documents, source code, prior outputs, relevant files retrieved from the codebase or a knowledge base.

- User input — the current request, the current task, the question being asked right now.

- State — accumulated results from prior steps in the workflow: what was decided, what was produced, what happened.

Layer by layer. If those layers are vague, incomplete, or misaligned, the output won't just be wrong — it will be confidently wrong. If those layers are structured and intentional, the model locks in, and the output sharpens.
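Here is one way the four layers could be made explicit in code. This is a minimal sketch, not a reference implementation: the `ContextStack` class, its field names, and the section labels are all hypothetical, chosen only to mirror the list above. The point it illustrates is that an empty layer becomes a loud error before generation, instead of a gap the model quietly fills in.

```python
from dataclasses import dataclass, field


@dataclass
class ContextStack:
    """Hypothetical container for the four context layers (names are assumptions)."""

    system_instructions: str
    data: list[str] = field(default_factory=list)
    user_input: str = ""
    state: dict[str, str] = field(default_factory=dict)

    def missing_layers(self) -> list[str]:
        """Return the names of layers that are empty."""
        missing = []
        if not self.system_instructions.strip():
            missing.append("system_instructions")
        if not self.data:
            missing.append("data")
        if not self.user_input.strip():
            missing.append("user_input")
        if not self.state:
            missing.append("state")
        return missing

    def render(self) -> str:
        """Join the layers into one labeled text block, in a fixed order."""
        missing = self.missing_layers()
        if missing:
            # Fail before generation rather than let the model guess.
            raise ValueError(f"empty context layers: {', '.join(missing)}")
        state_lines = "\n".join(f"{k}: {v}" for k, v in sorted(self.state.items()))
        sections = [
            ("SYSTEM INSTRUCTIONS", self.system_instructions),
            ("DATA", "\n---\n".join(self.data)),
            ("STATE", state_lines),
            ("USER INPUT", self.user_input),
        ]
        return "\n\n".join(f"## {title}\n{body}" for title, body in sections)
```

Calling `render()` on a stack with any empty layer raises `ValueError` naming the missing layers, which doubles as a cheap audit of the workflow. Whether an empty layer should be a hard error or a warning is a design choice; the sketch assumes the strict option.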

Most teams are still treating AI like a search box — typing requests and hoping for good results. The teams pulling ahead are doing something different: they're engineering the context. They decide what the model sees, how it's structured, and what "correct" looks like before generation starts.

State is context

There is one layer of the context stack that most organizations underinvest in — and it is the layer that separates one-shot demos from production systems.

When a coding agent runs a real task, it rarely finishes in a single step. It reads a spec, generates code, runs tests, reads the output, revises, and repeats. Each step produces data the later steps need. The accumulated result — which files were written, which tests passed, which validations fired, which decisions were made — becomes the context for the next step.

In other words, the context at step seven is everything the workflow has done by step six. If that state is well-structured, correctly typed, and carries a provenance record of who produced what and when, the agent at step seven has a precise picture of the task so far. If state is a pile of untyped globals, temp files, and mystery strings, the context is degraded before the next step starts — and the failure surfaces three steps downstream with an unrelated error message.

This is why the harness treats state as a first-class artifact. Typed accessors catch type mismatches the moment they happen, not five steps later. Versioned state lets you query what a field contained at any point in the workflow. A mutation log records every change — which field, what value, which step, what time. Provenance is not compliance theater; it is the debugging story for agent workflows, and it is what makes the context reliable as the work compounds.
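To make those three ideas concrete, here is a small sketch of a state store with a typed read, versioned lookups, and a mutation log. It is an illustration under assumptions, not the API of any particular harness: `WorkflowState`, `Mutation`, and every method name below are hypothetical.

```python
import time
from dataclasses import dataclass
from typing import Any, Optional


@dataclass(frozen=True)
class Mutation:
    """One mutation log entry: which field, what value, which step, what time."""

    version: int
    field_name: str
    value: Any
    step: str
    timestamp: float


class WorkflowState:
    """Hypothetical append-only state store; the log is the source of truth."""

    def __init__(self) -> None:
        self._log: list[Mutation] = []

    def set(self, field_name: str, value: Any, step: str) -> int:
        """Record a change and return the new version number (1-based)."""
        version = len(self._log) + 1
        self._log.append(Mutation(version, field_name, value, step, time.time()))
        return version

    def get(self, field_name: str, expected_type: type,
            at_version: Optional[int] = None) -> Any:
        """Typed read: fail here, at the read, if the stored type is wrong.

        With at_version set, return the value as of that version.
        """
        entries = self._log if at_version is None else self._log[:at_version]
        for m in reversed(entries):
            if m.field_name == field_name:
                if not isinstance(m.value, expected_type):
                    raise TypeError(
                        f"{field_name!r}: expected {expected_type.__name__}, "
                        f"got {type(m.value).__name__} "
                        f"(written by step {m.step!r}, version {m.version})"
                    )
                return m.value
        raise KeyError(field_name)

    def history(self, field_name: str) -> list[Mutation]:
        """Return every recorded change to one field, oldest first."""
        return [m for m in self._log if m.field_name == field_name]
```

If step `run_tests` writes `tests_passed` as an integer and a later step `summarize` overwrites it with a string, a read that expects `int` raises a `TypeError` that names `summarize` as the writer, while a read with `at_version=1` still returns the original integer. Keeping the log append-only is what makes both the versioned read and the provenance trail fall out of the same structure.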

Good state management is good context management. The two are the same discipline at different timescales.

Prompts vs. assembled context

Here is the operational shift most teams haven't made yet:

| Most teams are doing | The teams pulling ahead are doing |
|---|---|
| Writing prompts | Assembling context |
| Optimizing wording | Structuring inputs |
| Hoping for good output | Validating against contracts |
| Treating state as session noise | Treating state as a versioned asset |
| Fixing bad output by re-prompting | Fixing bad output by improving context |

The teams in the right column ship systems. The teams in the left column ship demos.
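"Validating against contracts" can start very small. The sketch below assumes the agent is asked to return a JSON object and that the contract is just a mapping of field names to expected Python types. Both the function name `validate_against_contract` and the contract shape are hypothetical; a production system would more likely reach for a schema library.

```python
import json


def validate_against_contract(raw_output: str, contract: dict[str, type]) -> list[str]:
    """Return a list of contract violations; an empty list means the output passes."""
    try:
        payload = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        return [f"output is not valid JSON: {exc.msg}"]
    if not isinstance(payload, dict):
        return ["output must be a JSON object"]

    violations = []
    for name, expected_type in contract.items():
        if name not in payload:
            violations.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected_type):
            violations.append(
                f"field {name!r}: expected {expected_type.__name__}, "
                f"got {type(payload[name]).__name__}"
            )
    return violations
```

A workflow step can refuse to write anything into state until this list comes back empty, which turns "hoping for good output" into a check that either passes or tells you exactly what was missing.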

What to do this week

Audit your most-used agent workflow for its context stack. What system instructions govern it? What data does it retrieve? What user input triggers it? What state does it accumulate? If any layer is missing or ad hoc, that is where your "confidently wrong" output is coming from.

Replace one untyped field in that workflow with a typed accessor. If a step reads a variable expecting a string and gets a number, the failure should happen at the read with a clear error — not three steps later with a mystery crash.

Start a mutation log for the next iteration. Every change to workflow state: which field, what value, what step, what time. When the output is wrong, you'll trace it in minutes instead of re-running the workflow to reproduce.

Stop rewarding clever prompts. Start rewarding well-engineered context. Promotion criteria, code review, engineering demos — all of it. What you measure is what you get.

The shift, one more time

This isn't about better prompts. It's about better inputs.

Same AI. Different inputs. Different outcomes.

Engineer your context.