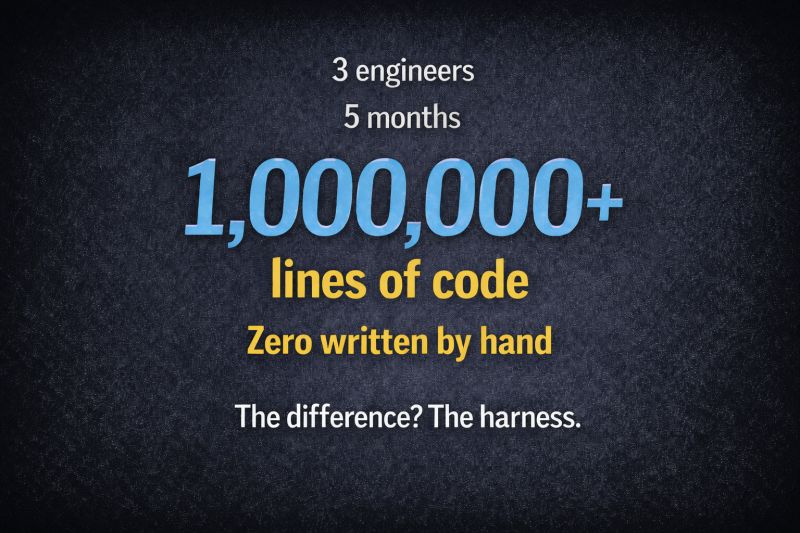

3 engineers.

5 months.

1,000,000+ lines of production code.

Zero written by hand.

Not a demo. Not a prototype. Production systems.

What most people miss

They didn't just "use AI." They built a harness — the infrastructure that makes AI output reliable.

- Specifications that defined exactly what to build.

- Validation pipelines that caught errors automatically.

- Workflows that enforced quality at every step.

- Architectural constraints encoded as rules.

Their job wasn't writing code. It was engineering the system that writes code.

And when they scaled from 3 engineers to 7, throughput increased. That breaks 50 years of software engineering assumptions.

The case study, in more detail

The team in question is OpenAI's Codex group, described in a February 2026 engineering retrospective. Three engineers began the project; seven finished it. Five months of elapsed time. Roughly one million lines of production code. None of it hand-typed.

The throughput curve is the part that should stop any engineering leader cold. Brooks' Law, named for Fred Brooks' 1975 observation in The Mythical Man-Month that "adding manpower to a late software project makes it later," has been the gravitational constant of software engineering for half a century. The number of pairwise communication paths grows quadratically with team size: n people have n(n-1)/2 of them. Coordination cost eats the gains from additional headcount. Every engineering leader has felt it.
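To put numbers on that growth for the team sizes in this story, here is a minimal sketch. It assumes every pair of engineers counts as one communication path, which is the usual pairwise reading of Brooks' argument; the function name is illustrative.

```python
def communication_paths(team_size: int) -> int:
    """Count distinct pairs in a team: n * (n - 1) / 2."""
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    return team_size * (team_size - 1) // 2


# Team sizes taken from the case study: 3 engineers at the start, 7 at the end.
paths_at_three = communication_paths(3)  # 3 pairs
paths_at_seven = communication_paths(7)  # 21 pairs
```

Headcount grew by a little more than 2x while the number of pairwise paths grew 7x. That gap is the cost the harness is said to absorb.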

OpenAI's team inverted it. Adding engineers did not slow them down because the coordination cost no longer sat between people. It sat in the harness. New engineers did not have to absorb tribal knowledge, interpret ambiguous tickets, or synchronize their mental models with every existing teammate. They read the specifications, contributed to the validation pipelines, and started producing. The harness was the shared context.

That is not a productivity story. It is a structural story about where coordination cost is paid — and what happens when it moves from human-to-human communication into engineered artifacts.

What the harness actually is

A harness is not a tool you buy. It is infrastructure you build, from a small set of primitives that any capable coding agent exposes.

Specifications as the source of truth. Every component the agent builds has a Markdown spec in version control: input contract, output contract, acceptance criteria, edge cases. When the agent's output is wrong, you fix the spec and regenerate. You do not hand-edit the generated code. The spec is the artifact; the code is derivative.
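One way to keep specs honest is to lint them like code. The sketch below is an assumption about how that might look, not a description of the OpenAI team's tooling: it treats the four parts named above as required Markdown headings and reports any that are missing. The heading names, the function name, and the example spec are all hypothetical.

```python
import re

# Required sections, taken from the four parts of a spec listed above.
REQUIRED_SECTIONS = (
    "Input contract",
    "Output contract",
    "Acceptance criteria",
    "Edge cases",
)


def missing_sections(spec_markdown: str) -> list[str]:
    """Return the required sections that have no matching Markdown heading."""
    headings = {
        match.group(1).strip().lower()
        for match in re.finditer(r"^#+\s+(.+?)\s*$", spec_markdown, flags=re.MULTILINE)
    }
    return [name for name in REQUIRED_SECTIONS if name.lower() not in headings]


# Hypothetical spec that forgot its edge cases.
EXAMPLE_SPEC = """\
# Rate limiter

## Input contract
A request with a client ID and a timestamp.

## Output contract
Allow or deny, plus a retry-after value in seconds.

## Acceptance criteria
- A client is denied after exceeding its per-minute quota.
"""

problems = missing_sections(EXAMPLE_SPEC)  # ["Edge cases"]
```

Run as a pre-commit or CI step, a check like this rejects an incomplete spec before any agent is asked to build from it.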

Automated validation as the gate. Between agent output and production sits a pipeline of deterministic tools — test suites, type checkers, linters, security scanners, coverage analyzers, performance profilers. They return pass or fail, not opinions. A guard condition gates deployment: if any check rejects the diff, the implementation is rejected and the spec is revised. The spec defines intent. The validation tools enforce it.

Workflows as the coordination layer. Real tasks are multi-step. Real systems are multi-component. A workflow engine decomposes work into a directed graph: fan-out nodes that run parallel agents, reducers that merge their results, conditional branches that route on outcomes, correlation IDs that trace every decision end-to-end. The workflow graph is your org chart, compressed into a single person's operating model.
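A toy version of such a graph fits in one function. The sketch below assumes a thread pool for the fan-out and uses a placeholder in place of a real agent call; the step names `revise_spec` and `human_review` are hypothetical, not taken from any specific workflow engine.

```python
import uuid
from concurrent.futures import ThreadPoolExecutor


def run_agent(task: str, correlation_id: str) -> dict:
    """Placeholder for a real agent call; tasks prefixed with 'fail' simulate a failure."""
    return {"task": task, "ok": not task.startswith("fail"), "correlation_id": correlation_id}


def reduce_results(results: list[dict]) -> dict:
    """Reducer: merge the parallel results into a single summary."""
    failed = [result["task"] for result in results if not result["ok"]]
    return {"all_ok": not failed, "failed": failed}


def run_workflow(tasks: list[str]) -> dict:
    correlation_id = str(uuid.uuid4())  # one ID traces every step of this run
    with ThreadPoolExecutor() as pool:  # fan-out: one agent per task, in parallel
        results = list(pool.map(lambda task: run_agent(task, correlation_id), tasks))
    summary = reduce_results(results)
    # Conditional branch: route on the merged outcome.
    next_step = "human_review" if summary["all_ok"] else "revise_spec"
    return {"correlation_id": correlation_id, "next_step": next_step, **summary}


outcome = run_workflow(["build_api", "fail_migration", "build_ui"])
```

A production engine would add retries, persistence, and per-node logging, but the shape stays the same: fan out, reduce, branch, and carry one ID through all of it.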

Architectural constraints as enforced rules. The shape of the codebase is not left up to the agent. Naming conventions, layering rules, dependency directions, forbidden imports, security boundaries — all of it expressed as automated checks. The rules run on every diff, not just on pull-request day. The agent cannot drift the architecture because the architecture is enforced by tooling, not by reviewer memory.
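A forbidden-import rule is one of the simplest constraints to automate. The sketch below uses Python's standard `ast` module to flag imports that cross a layer boundary. The layer names and the rule table are hypothetical; a real codebase would derive the layer from the file path.

```python
import ast

# Hypothetical layering rule: code in the "domain" layer must not import
# from the "infrastructure" or "web" packages.
FORBIDDEN_IMPORTS = {"domain": {"infrastructure", "web"}}


def forbidden_imports(source: str, layer: str) -> list[str]:
    """Return one message per import in `source` that the layer is not allowed to use."""
    banned = FORBIDDEN_IMPORTS.get(layer, set())
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            names = [node.module or ""]  # module is None for "from . import x"
        else:
            continue
        for name in names:
            if name.split(".")[0] in banned:
                violations.append(f"line {node.lineno}: {name}")
    return violations


EXAMPLE_SOURCE = "import os\nfrom infrastructure.db import Session\n"
violations = forbidden_imports(EXAMPLE_SOURCE, layer="domain")
```

Wired into the validation gate, a non-empty result fails the diff, so the rule holds without depending on a reviewer remembering it.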

These are not exotic capabilities. They are the primitives every modern coding agent already exposes: persistent memory that retains context across sessions, file-system access that lets agents read the codebase directly, typed tool calls that route side effects through controllable interfaces, subagent delegation that parallelizes independent work inside a ReAct loop. What the OpenAI team did was assemble those primitives into discipline.

What your engineers actually do in a harnessed organization

If the agent writes the code, the natural question — the one the LinkedIn post ends on — is what the engineers do.

The answer is not "less work." It is categorically different work.

- They author specifications. Testable, versioned, precise. The specification is the handoff; they own that handoff.

- They design validation pipelines. What invariants must hold? Which checks catch which classes of failure? What is the gate-fail policy?

- They build and maintain the harness itself. The workflow graphs, the architectural rules, the context assembly logic. The tool that makes the factory.

- They do human-in-the-loop review at destructive boundaries. Production deploys. Schema changes. Anything irreversible. Not line-by-line reading of every diff — reviewing the boundaries where the validation pipeline intentionally stops and escalates.

- They design for the novel. The places where the spec can't yet be written up front (new algorithms, research-grade optimizations, unfamiliar domains) are where exploratory prototyping still happens. The harness handles the known patterns; engineers focus on the edges.
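The review-at-the-boundary item in the list above can be expressed as a routing policy. This is a minimal sketch with hypothetical change kinds: `production_deploy` and `schema_change` come from the list, while `data_deletion` is an assumed example of "anything irreversible".

```python
from enum import Enum


class Route(Enum):
    AUTO_MERGE = "auto_merge"
    HUMAN_REVIEW = "human_review"
    REJECT = "reject"


# Change kinds treated as destructive boundaries (the last one is an assumption).
DESTRUCTIVE_KINDS = {"production_deploy", "schema_change", "data_deletion"}


def route_change(kind: str, checks_passed: bool) -> Route:
    """Decide where a change goes after the validation pipeline has run."""
    if not checks_passed:
        return Route.REJECT  # failed validation never reaches a human reviewer
    if kind in DESTRUCTIVE_KINDS:
        return Route.HUMAN_REVIEW  # the pipeline stops and escalates
    return Route.AUTO_MERGE
```

Human attention is spent only on changes that have already passed every automated check and that also cross an irreversible boundary.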

That is a senior technical role. It demands more rigor, not less — requirements engineering, systems design, validation architecture, and security boundaries all compressed into the same job description. The people who can do it are the ones who will staff the next generation of shipping organizations.

What to do this quarter

Pick one output of your team — a feature, a service, a pipeline — and audit it against the four harness components. Is there a spec? Is there automated validation? Is there a workflow graph? Are the architectural constraints machine-enforced? For each "no," you have a concrete place to invest.

Invert the investment ratio. Most organizations spend on tools (model subscriptions, copilots, IDE plugins) and almost nothing on the harness. Flip it. The tools are a commodity; the harness is the moat.

Write a job description for a harness engineer and hire one. Not "AI-assisted developer." Not "prompt engineer." The person who owns the specification patterns, the validation pipelines, the workflow topology, and the architectural rulebook. This is the role that compounds.

Measure systems, not experiments. Defect rate on AI-generated diffs. Time from spec to deploy. Number of specifications under version control. Iterations per spec before acceptance. These are the metrics of a compounding system.
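These metrics are cheap to compute once each spec-to-deploy cycle is recorded. The sketch below assumes a hypothetical record shape and defines defect rate as the share of deployed diffs with at least one defect traced back to them; both are illustrative choices, not an established standard.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass(frozen=True)
class SpecRun:
    spec_id: str
    spec_committed: datetime
    deployed: datetime
    iterations: int      # regenerations before the diff was accepted
    defects_found: int   # defects later traced back to this diff


def harness_metrics(runs: list[SpecRun]) -> dict:
    """Summarize the four metrics named above for a set of completed runs."""
    if not runs:
        raise ValueError("need at least one completed run")
    return {
        "specs_under_version_control": len({run.spec_id for run in runs}),
        "defect_rate": sum(1 for run in runs if run.defects_found > 0) / len(runs),
        "mean_hours_spec_to_deploy": mean(
            (run.deployed - run.spec_committed).total_seconds() / 3600 for run in runs
        ),
        "mean_iterations_per_spec": mean(run.iterations for run in runs),
    }


# Hypothetical data: two runs, one of which shipped a defect.
EXAMPLE_RUNS = [
    SpecRun("rate-limiter", datetime(2026, 1, 5, 9), datetime(2026, 1, 6, 9), 2, 0),
    SpecRun("audit-log", datetime(2026, 1, 7, 9), datetime(2026, 1, 9, 9), 4, 1),
]
metrics = harness_metrics(EXAMPLE_RUNS)
```

Tracked per week or per release, the trend in these numbers matters more than any single value.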

The difference, in one line

Most organizations are investing in AI tools. The ones pulling ahead are investing in the harness.

That's the difference between:

- Experiments that stall

- Systems that compound

If AI wrote your code tomorrow, what would your engineers actually do?