The goal isn't for coding agents to write the code faster.

The goal is to give them better direction.

The teams pulling ahead aren't using AI as a tool. They're using it as a system.

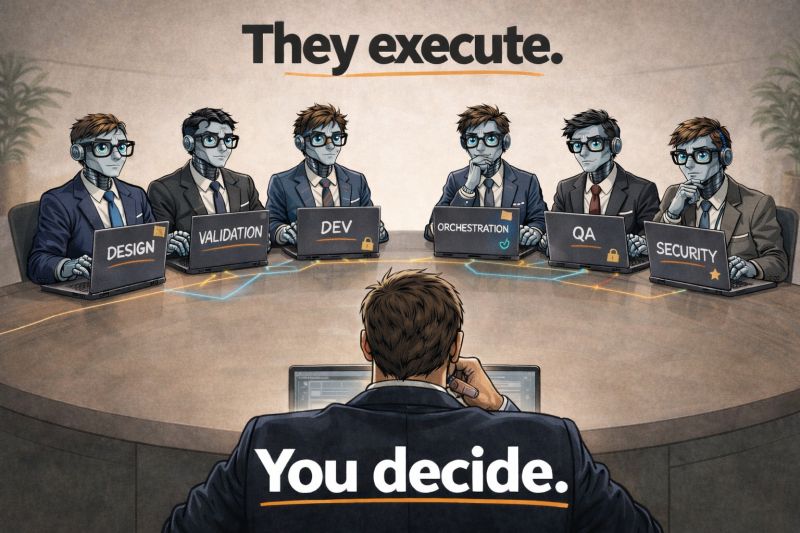

Each agent has a role. Each step has a contract. Each output is validated.

The work gets done. They execute. You decide.

"A system, not a tool" is a load-bearing sentence

Most organizations have landed on AI the way they land on any new tool: pilot it, train a few people, watch the demos, decide it's promising. A coding agent becomes one more thing on the stack — next to the IDE, next to the CI pipeline, next to the feature flag service.

That framing is why the gains stay small. A tool is something a person picks up to do a task. A system is something the organization operates, with defined roles, defined interfaces, and a protocol for how work moves through it. The first gives you a productivity bump for the person holding the tool. The second changes how work gets done.

The distinction is the entire game.

Each agent has a role

Start with the word role. In a tool view of AI, there is no such thing — the agent is a generic helper that does whatever the prompt asks for. In a system view, each agent is registered with a capability set: code review, test generation, database migrations, security scanning, infrastructure provisioning, whatever the work requires. The orchestrator queries an agent registry to find agents that match the task. If no agent has the capability, the delegation fails immediately, not three steps later with an unrelated error.

This looks simple in isolation, but it is decisive in aggregate. A registry turns "which agent should I ask?" from a judgment call into a lookup. It also turns "is the agent still alive?" from a hope into a heartbeat. Production multi-agent systems, the ones that actually ship rather than demo, check liveness at the boundary of every delegation, because a registry that doesn't reflect reality is worse than no registry at all. An agent marked available that crashed five minutes ago is a landmine.
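A minimal sketch of the registry idea, assuming an in-memory store. The names `AgentRegistry`, `NoCapableAgentError`, and the 30-second timeout are illustrative, not taken from any particular framework. It shows the two properties described above: capability lookup that fails immediately, and a heartbeat that keeps stale agents out of the results.

```python
from __future__ import annotations

from dataclasses import dataclass, field


class NoCapableAgentError(LookupError):
    """Raised when no live agent declares the requested capability."""


@dataclass
class AgentRecord:
    name: str
    capabilities: frozenset[str]
    last_heartbeat: float  # seconds, on whatever clock the caller supplies


@dataclass
class AgentRegistry:
    heartbeat_timeout: float = 30.0  # assumed value; tune per deployment
    _agents: dict[str, AgentRecord] = field(default_factory=dict)

    def register(self, name: str, capabilities: set[str], now: float) -> None:
        self._agents[name] = AgentRecord(name, frozenset(capabilities), now)

    def heartbeat(self, name: str, now: float) -> None:
        # Agents call this periodically to prove they are still alive.
        self._agents[name].last_heartbeat = now

    def find(self, capability: str, now: float) -> list[str]:
        # Only agents that declare the capability AND reported recently count.
        live = [
            agent.name
            for agent in self._agents.values()
            if capability in agent.capabilities
            and now - agent.last_heartbeat <= self.heartbeat_timeout
        ]
        if not live:
            # Fail at delegation time, not three steps later.
            raise NoCapableAgentError(capability)
        return sorted(live)
```

Passing `now` explicitly keeps the sketch deterministic; a real deployment would more likely read a monotonic clock and persist the registry somewhere shared.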

The role, in other words, is not a slide in a deck. It is a structured entry in a system the orchestrator queries on every task.

Each step has a contract

Now the word contract. When one role passes work to another, it does not pass a paragraph of natural language and hope for the best. It passes a typed message with explicit fields: a unique ID, a topic, a sender, a correlation ID that links related messages across the workflow, and a payload validated against a schema registered for that topic.

If the payload is missing a required field or the types are wrong, the message is rejected at the boundary. The receiving agent never sees malformed data. The failure is loud, early, and traceable to the sender — not silent, late, and blamed on whoever was nearest when the crash happened.
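One way to picture that boundary check, using only the standard library. The topic name `review.requested`, the `SCHEMAS` table, and the `make_message` helper are hypothetical; a production system would more likely use a schema language such as JSON Schema rather than a dictionary of Python types.

```python
from __future__ import annotations

import uuid
from dataclasses import dataclass
from typing import Any


class ContractViolation(ValueError):
    """Raised at the boundary; the message names the sender for traceability."""


# Hypothetical schema registry: topic -> required payload fields and their types.
SCHEMAS: dict[str, dict[str, type]] = {
    "review.requested": {"repo": str, "commit": str, "max_findings": int},
}


@dataclass(frozen=True)
class Message:
    id: str
    topic: str
    sender: str
    correlation_id: str
    payload: dict[str, Any]


def make_message(
    topic: str, sender: str, correlation_id: str, payload: dict[str, Any]
) -> Message:
    """Validate the payload against the topic's schema before building a message."""
    schema = SCHEMAS.get(topic)
    if schema is None:
        raise ContractViolation(f"{sender}: no schema registered for {topic!r}")
    for name, expected in schema.items():
        if name not in payload:
            raise ContractViolation(f"{sender}: {topic} payload missing {name!r}")
        if not isinstance(payload[name], expected):
            raise ContractViolation(
                f"{sender}: {topic} field {name!r} must be {expected.__name__}"
            )
    # Only a payload that passed every check becomes a deliverable message.
    return Message(str(uuid.uuid4()), topic, sender, correlation_id, dict(payload))
```

Because validation happens in the constructor path, a receiving agent can only ever be handed a `Message` whose payload already matched the schema, and the error text points back at the sender.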

The shift here is not pedantic. Anthropic's write-up of its multi-agent research system reported that a lead agent coordinating subagents outperformed a single agent by roughly 90% on its internal research evaluation, with the largest gains on breadth-first queries. The same write-up notes that the gains depended on how work was handed off: when the lead agent delegated with short, vague instructions, subagents duplicated work or misread the task, so each delegation needed an explicit objective, output format, and task boundaries. Structure was not overhead. Structure was the reason it worked.

Every step having a contract also means every step is debuggable. Typed messages have IDs, timestamps, and correlation IDs. When something goes wrong in a multi-agent workflow, you trace the exact sequence of messages that led to the failure. Try doing that with unstructured text passed between chat windows. You can't. That is the difference between engineering and storytelling.
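To make the tracing claim concrete, here is a small sketch that assumes messages are appended to a log as they are sent. The `LoggedMessage` fields mirror the ones listed above; the log contents and the `trace` helper are invented for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class LoggedMessage:
    id: str
    timestamp: float
    topic: str
    sender: str
    correlation_id: str


def trace(log: list[LoggedMessage], correlation_id: str) -> list[LoggedMessage]:
    """Return one workflow's messages in the order they were sent."""
    related = [m for m in log if m.correlation_id == correlation_id]
    return sorted(related, key=lambda m: m.timestamp)


# Illustrative log: two workflows interleaved, stored out of order.
LOG = [
    LoggedMessage("m3", 12.0, "tests.failed", "tester", "wf-1"),
    LoggedMessage("m1", 10.0, "spec.approved", "planner", "wf-1"),
    LoggedMessage("m9", 10.5, "spec.approved", "planner", "wf-2"),
    LoggedMessage("m2", 11.0, "code.submitted", "implementer", "wf-1"),
]

for message in trace(LOG, "wf-1"):
    print(f"{message.timestamp:>5} {message.sender:<12} {message.topic}")
```

The correlation ID does the filtering and the timestamp does the ordering, so the failed test run can be read back to the spec and the submission that preceded it.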

Each output is validated

The third word is validated. Before any agent's output crosses a boundary — into production, into another agent's context, into a decision that affects a human — an automated pipeline runs deterministic checks: tests, type checks, linters, security scans, performance profiles, coverage analysis. Pass or fail. Not opinions.

In a tool view of AI, validation is a human reviewer eyeballing a diff. In a system view, validation is a pipeline that runs on every output, every time, with a gate condition: if any check rejects, the implementation is rejected and the step retries against a revised specification. Humans gate the escalations — destructive boundaries, production deploys, schema changes, anything irreversible. The pipeline handles everything else.
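The gate-and-retry loop can be sketched as follows. Everything here is an assumption made for illustration: the checks are stand-in predicates (in practice they would invoke a test runner, type checker, linter, or scanner), and `generate` and `revise_spec` are hypothetical callables supplied by the orchestrator.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable

Check = Callable[[str], bool]  # takes the candidate output, returns pass/fail


class StepRejected(RuntimeError):
    """Raised when no attempt passes the gate within the retry budget."""


@dataclass
class GateResult:
    passed: bool
    failed_checks: list[str]


def run_gate(output: str, checks: dict[str, Check]) -> GateResult:
    # Every check runs on every output; a single failure rejects it.
    failed = [name for name, check in checks.items() if not check(output)]
    return GateResult(passed=not failed, failed_checks=failed)


def execute_step(
    generate: Callable[[str], str],
    revise_spec: Callable[[str, list[str]], str],
    spec: str,
    checks: dict[str, Check],
    max_attempts: int = 3,
) -> tuple[str, int]:
    """Generate, gate, and retry against a revised spec until the gate passes."""
    for attempt in range(1, max_attempts + 1):
        output = generate(spec)
        result = run_gate(output, checks)
        if result.passed:
            return output, attempt
        # Feed the failures back into the specification, then try again.
        spec = revise_spec(spec, result.failed_checks)
    raise StepRejected(f"gate still failing after {max_attempts} attempts")
```

The retry budget is a design choice: once it is exhausted the step raises instead of shipping, which is the point at which a human would normally be pulled in.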

This is how probabilistic generation is held to deterministic standards. The model can be creative. The validator is not. The creativity is caged by a contract the code must satisfy before it ships.

They execute. You decide.

Put the three concepts together and you have the operating model the sentence is pointing at.

- Roles make work assignable — a registry answers "who can do X?" in one query.

- Contracts make handoffs reliable — typed messages carry what the next step needs, validated before delivery.

- Validation makes outputs gateable — automated checks decide pass or fail before anything ships.

The person in the center of this system is not typing faster. They are doing what a CEO does inside a company: defining outcomes, delegating, verifying. The coding agents are the executors. The human decides what "done" means, which validations gate which steps, and where the escalation boundaries sit.
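The human's decisions in that paragraph can be written down as data rather than left as habit. The sketch below is hypothetical: the step names, check names, and boundary labels are examples drawn from the text above, and `decide` is an illustrative helper, not part of any real orchestrator.

```python
from __future__ import annotations

from dataclasses import dataclass

# Irreversible actions that always need a human sign-off (assumed labels).
ESCALATION_BOUNDARIES = frozenset(
    {"production_deploy", "schema_change", "destructive_operation"}
)


@dataclass(frozen=True)
class StepPolicy:
    required_checks: tuple[str, ...]  # what "done" means for this step
    action: str | None = None         # set when the step performs a boundary action


# The policy a human owns: which validations gate which steps.
POLICY = {
    "implement_feature": StepPolicy(("tests", "type_check", "lint")),
    "apply_migration": StepPolicy(("tests", "security_scan"), action="schema_change"),
}


def decide(step: str, passed_checks: set[str], human_approved: bool = False) -> str:
    """Return 'reject', 'escalate', or 'proceed' for a finished step."""
    policy = POLICY[step]
    missing = [c for c in policy.required_checks if c not in passed_checks]
    if missing:
        return "reject"
    if policy.action in ESCALATION_BOUNDARIES and not human_approved:
        return "escalate"
    return "proceed"
```

Agents execute against this table; changing what ships without review means editing the table, which is a decision a person makes deliberately.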

That is the shift. Not "AI writes code faster." The organization writes software differently.

What to do this week

- Name the roles in your most-used AI-assisted workflow. If every task goes to "the AI" rather than to a role with declared capabilities, you have a tool, not a system. The fix is to decompose: code review, test generation, spec authoring, security scan — each with a capability label the orchestrator can look up.

- Turn one handoff into a contract. Pick one step where work passes between humans (or between a human and the agent) via Slack messages, tickets, or ad-hoc paragraphs. Replace it with a typed payload: required fields, validated schema, correlation ID. Watch how much downstream rework disappears.

- Put a validation gate on one output. Not a review meeting. A pipeline. Pass/fail before the output crosses the next boundary. Measure the defect rate before and after. That is your operating-model-change metric.

The shift, one more time

Each agent has a role. Each step has a contract. Each output is validated.

The work gets done. They execute. You decide.

AI doesn't change software economics by writing code faster. The economics change when leadership changes the operating model.