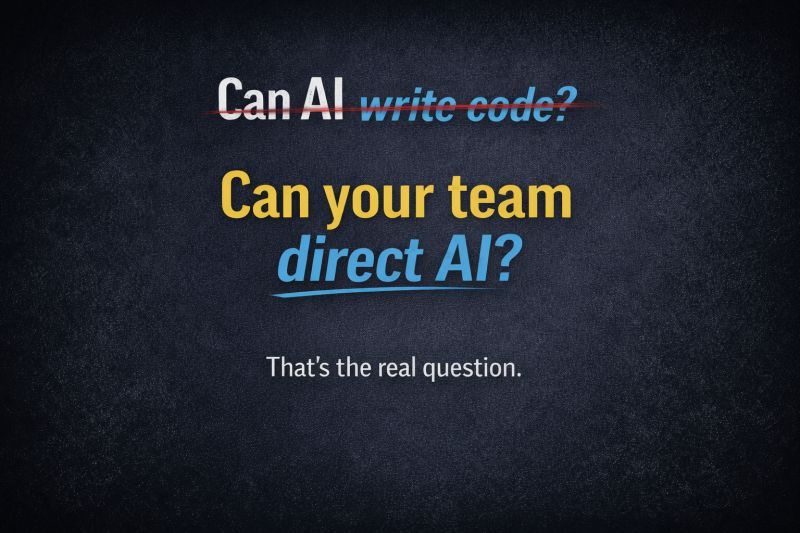

The question has changed.

It's no longer whether AI can write code.

It can. That's settled.

The real question is: can your team direct it?

The question most organizations are still asking

"Can AI write code?" was a 2024 question. The answer came in, and it was yes. By now the demos have shipped, the pilots have happened, and every engineering leader has seen a coding agent produce a working feature in an afternoon.

So the 2024 question is closed, and the teams still asking it are two years behind. The operative question is structural, not technical: can your organization consistently get correct, shippable output from these systems on a Tuesday morning, on the thing you actually care about, with the people you actually have?

If the honest answer is "sometimes, depending on who's driving," you do not have an AI strategy. You have an experiment.

What "direct it" actually means

Directing a coding agent is not prompting it. It is engineering the context around it.

A large language model generates text one token at a time, predicting each token from what is in its context window. In a transformer, the attention mechanism computes a set of weights over the tokens in that window for every prediction, so the tokens most relevant to the current step carry the most influence. What reaches the context window determines what can be attended to, and what gets attended to shapes what comes out. That is not a metaphor. It is the mechanism.
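To make the mechanism concrete, here is a toy sketch of scaled dot-product attention for a single query, in plain Python. The vectors are made-up illustrative numbers, not taken from any real model, and a production transformer runs many such heads over learned projections. The point it shows: the weights form a probability distribution over exactly the tokens present in the window, and the output is a weighted mix of those tokens' values. Anything left out of the window has no entry in the sum, so it cannot influence the result.

```python
import math

def attention_weights(query, keys):
    """Scaled dot-product attention weights: softmax(q . k_i / sqrt(d)) over the keys in context."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    # Subtract the max score before exponentiating, for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Attention output for one query: the weighted sum of the value vectors in context."""
    weights = attention_weights(query, keys)
    dim = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim)]

# Toy 2-d vectors (illustrative numbers only). Three tokens are "in the window".
context_keys = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
context_values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
query = [1.0, 0.0]

weights = attention_weights(query, context_keys)
print([round(w, 3) for w in weights])
print(attend(query, context_keys, context_values))
```

The keys most similar to the query (the first and third) get most of the weight; the dissimilar one still gets some, and a token that was never placed in `context_keys` gets none.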

A typical chat session fills that context window with a conversational request and a few system instructions: a small fraction of what the window can hold, and rarely the information the task most needs. A coding agent operating inside a harness fills the context window deliberately: specifications, the relevant source files, type definitions, test cases, architectural constraints, prior decisions. Same model. Same week. The output quality is not slightly different. It is categorically different.
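What filling the window deliberately can look like, as a minimal sketch: a harness ranks candidate context items and packs the most important ones into a fixed token budget. The `ContextItem` type, the priority scheme, and the four-characters-per-token estimate are all illustrative assumptions rather than any particular tool's behavior; a real harness would count tokens with the model's own tokenizer and would likely select files by relevance rather than a hand-assigned priority.

```python
from dataclasses import dataclass

@dataclass
class ContextItem:
    kind: str      # e.g. "spec", "source", "types", "tests", "constraints", "decision"
    name: str
    text: str
    priority: int  # lower number = more important (hypothetical ranking scheme)

def estimate_tokens(text: str) -> int:
    # Rough heuristic (assumption): about four characters per token.
    return max(1, len(text) // 4)

def assemble_context(items: list[ContextItem], budget_tokens: int) -> str:
    """Pack the highest-priority items into the window until the budget is spent."""
    chosen, used = [], 0
    for item in sorted(items, key=lambda i: i.priority):
        cost = estimate_tokens(item.text)
        if used + cost > budget_tokens:
            continue  # skip an item that does not fit; a smaller one later might
        chosen.append(item)
        used += cost
    # Label each section so the model can tell the spec from the source from the tests.
    return "\n\n".join(f"### {i.kind}: {i.name}\n{i.text}" for i in chosen)

# Hypothetical candidate items for a refund feature.
items = [
    ContextItem("spec", "refund-flow.md", "Refunds over $500 require manager approval.", 0),
    ContextItem("types", "refund.py", "class Refund: amount_cents: int; approved_by: str | None", 1),
    ContextItem("tests", "test_refund.py", "def test_large_refund_needs_approval(): ...", 2),
    ContextItem("source", "legacy_utils.py", "x" * 4000, 5),  # large, low-priority file
]
prompt_context = assemble_context(items, budget_tokens=200)
print(prompt_context)
```

The large, low-priority file is left out because it would blow the budget, while the spec, types, and tests all make it in. Labeling each section by kind is a small design choice that lets the model, and a human reading the logs, tell the spec apart from the source and the tests.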

That is what "directing" means. You are not coaxing a chatbot. You are assembling three things: the structured context that steers what the model attends to, the contract the output must satisfy, and the automated validation that decides whether the output is acceptable before it reaches production.

Vibe coding vs. reliable systems

This is the operating gap.

Vibe coding. One engineer, one chat window, one prompt at a time. Context is whatever the human remembered to paste in. Acceptance is the human eyeballing the diff. Reproducibility is whether the same person can get lucky again next week. Good output is a story about an individual, not a property of the system.

Reliable systems. Specifications in version control. Typed contracts that define what "done" means for every component. Automated validation — tests, type checks, linters, security scans, coverage analysis — running in parallel on every diff, gating every merge. Orchestration that routes work to the right agent, tracks every task, and recovers when something fails. Good output is a property of the system. The individual matters, but the system does not depend on heroics.
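The orchestration layer can be sketched as a loop that routes each task to an agent by task type, records every attempt, and retries a bounded number of times before escalating to a human. The `Task` type and both agent functions are hypothetical stand-ins; in practice each agent would invoke a coding agent and then the validation pipeline, returning whether the result passed.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Task:
    task_id: str
    kind: str                                           # used to pick an agent
    attempts: list[str] = field(default_factory=list)   # audit trail of outcomes
    status: str = "pending"

# An "agent" here is any callable that returns True when its output passed validation.
Agent = Callable[[Task], bool]

def run(tasks: list[Task], routes: dict[str, Agent], max_attempts: int = 3) -> None:
    """Route each task, track every attempt, retry on failure, escalate when out of retries."""
    for task in tasks:
        agent = routes.get(task.kind)
        if agent is None:
            task.status = "unroutable"
            continue
        for attempt in range(1, max_attempts + 1):
            ok = agent(task)
            task.attempts.append(f"attempt {attempt}: {'pass' if ok else 'fail'}")
            if ok:
                task.status = "done"
                break
        else:
            # Out of retries: hand the task to a human instead of silently dropping it.
            task.status = "escalated"

# Hypothetical agents for illustration only.
def flaky_feature_agent(task: Task) -> bool:
    return len(task.attempts) >= 1  # fails on the first try, passes on the retry

def broken_review_agent(task: Task) -> bool:
    return False

tasks = [Task("T-1", "feature"), Task("T-2", "review"), Task("T-3", "docs")]
run(tasks, {"feature": flaky_feature_agent, "review": broken_review_agent})
for t in tasks:
    print(t.task_id, t.status, t.attempts)
```

Every task ends in an explicit state (done, escalated, or unroutable) with its full attempt history, which is what makes "how did last week go?" answerable from data instead of memory.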

The difference is not polish. It is reproducibility. A vibe-coded workflow might produce good output this week. "Might" is not engineering. Repeatable results are.

Signs your organization is still vibe-coding

- Every good result has a specific person's name attached to it. The same work with a different person goes sideways.

- Reviewers read generated diffs line-by-line because there is no automated pipeline they trust to do it for them.

- Nobody can answer "how many AI-generated diffs went to production last month, and what was the defect rate?" with a number.

- The specification for any given piece of work is a Slack message or a paragraph in a ticket, not a versioned artifact.

- Your response to "the output was wrong" is to prompt the model differently, not to fix the specification and regenerate.

If three or more of those describe your team, the question to ask is not "which model should we use?" It is "what operating model are we asking these tools to live inside?"
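The defect-rate question from that list is simple arithmetic once merged diffs are tagged. A minimal sketch, assuming each merged diff is recorded with its merge date, whether an agent generated it, and whether a defect was later traced back to it. The `MergedDiff` record and the sample rows are hypothetical; the real fields would come from your version control and incident tracking.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class MergedDiff:
    diff_id: str
    merged_on: date
    ai_generated: bool
    caused_defect: bool  # set later, when a bug or incident is traced back to this diff

def monthly_ai_stats(diffs: list[MergedDiff], year: int, month: int) -> tuple[int, float]:
    """Return (AI-generated diffs merged that month, fraction later linked to a defect)."""
    in_month = [
        d for d in diffs
        if d.ai_generated and d.merged_on.year == year and d.merged_on.month == month
    ]
    if not in_month:
        return 0, 0.0
    defects = sum(1 for d in in_month if d.caused_defect)
    return len(in_month), defects / len(in_month)

# Illustrative sample records, not real data.
diffs = [
    MergedDiff("a1", date(2025, 5, 2), True, False),
    MergedDiff("a2", date(2025, 5, 9), True, True),
    MergedDiff("a3", date(2025, 5, 20), True, False),
    MergedDiff("a4", date(2025, 5, 28), True, False),
    MergedDiff("h1", date(2025, 5, 11), False, True),  # human-written: excluded
    MergedDiff("a5", date(2025, 6, 1), True, True),    # next month: excluded
]
count, rate = monthly_ai_stats(diffs, 2025, 5)
print(f"{count} AI-generated diffs merged, defect rate {rate:.0%}")
# prints: 4 AI-generated diffs merged, defect rate 25%
```

The hard part is not the arithmetic but the tagging: if diffs are not marked as agent-generated at merge time, no query can recover the number later.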

What to do this quarter

Name one flow where you need reliability. Not the most exciting one: the one where reproducibility matters most. Onboarding, feature delivery, code review, incident response. Something that has to work the same way on Tuesday as it did on Friday.

Write the specification before the prompt. Input contract, output contract, acceptance criteria, examples, edge cases. The spec is the direction you're giving; the prompt is just the vehicle.
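One way to make the spec a versioned artifact instead of a ticket paragraph is to give it a typed shape that lives in the repository next to the code. This is a minimal sketch: the fields mirror the five items above, but the `Spec` and `Example` classes, the `version` field, and the refund rule are illustrative assumptions, not a standard format. Because the examples and edge cases are data, the spec can check an implementation directly.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Example:
    given: dict    # keyword arguments for the implementation
    expect: object

@dataclass(frozen=True)
class Spec:
    spec_id: str
    version: int                       # bump on every revision; the file lives in version control
    input_contract: dict[str, type]
    output_contract: type
    acceptance_criteria: tuple[str, ...]
    examples: tuple[Example, ...]
    edge_cases: tuple[Example, ...]

    def check_input(self, args: dict) -> list[str]:
        """Return input-contract violations (an empty list means the input is acceptable)."""
        problems = [f"missing {k}" for k in self.input_contract if k not in args]
        problems += [
            f"{k} should be {t.__name__}"
            for k, t in self.input_contract.items()
            if k in args and not isinstance(args[k], t)
        ]
        return problems

    def passes(self, impl) -> bool:
        """True if impl returns the right type and value for every example and edge case."""
        for case in self.examples + self.edge_cases:
            result = impl(**case.given)
            if not isinstance(result, self.output_contract) or result != case.expect:
                return False
        return True

# Hypothetical spec for a refund-approval rule.
refund_spec = Spec(
    spec_id="refund-approval",
    version=3,
    input_contract={"amount_cents": int, "has_manager_approval": bool},
    output_contract=bool,
    acceptance_criteria=(
        "Refunds up to 50000 cents are allowed without approval.",
        "Refunds above 50000 cents require manager approval.",
    ),
    examples=(Example({"amount_cents": 1200, "has_manager_approval": False}, True),),
    edge_cases=(
        Example({"amount_cents": 50000, "has_manager_approval": False}, True),
        Example({"amount_cents": 50001, "has_manager_approval": False}, False),
    ),
)

def approve_refund(amount_cents: int, has_manager_approval: bool) -> bool:
    return amount_cents <= 50000 or has_manager_approval

print(refund_spec.passes(approve_refund))
print(refund_spec.check_input({"amount_cents": "12.00"}))
```

When the output is wrong, this shape makes the fix concrete: add the missing edge case, bump `version`, and regenerate, rather than rewording the prompt.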

Put automated validation between the agent and production. If a human has to eyeball every diff before it lands, you have not industrialized anything. You have just added a new source of diffs for that human to read.

Measure iterations-per-specification. How many times does a spec get revised before its output passes? That number is a management metric: a persistently high count points to your specifications or your validation as the weakest link, and it beats "how's the AI rollout going?" every time.
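Once each spec revision and its validation outcome are logged, the metric takes a few lines to compute. A minimal sketch, assuming a hypothetical log of `(spec_id, revision, passed)` rows; the log format and sample data are assumptions. Here an iteration is one spec version that was generated and validated, so a spec that passes on revision 3 took three iterations (two revisions). Specs that never passed are reported separately instead of being averaged in.

```python
from statistics import mean
from typing import Optional

# Hypothetical validation log: (spec_id, spec revision, did the output pass validation?)
log = [
    ("onboarding", 1, False),
    ("onboarding", 2, True),
    ("refund-approval", 1, False),
    ("refund-approval", 2, False),
    ("refund-approval", 3, True),
    ("search-ranking", 1, False),
    ("search-ranking", 2, False),
]

def iterations_per_spec(rows) -> dict:
    """Map spec_id -> revision number of the first passing version (None if none passed)."""
    first_pass: dict = {}
    for spec_id, revision, passed in rows:
        first_pass.setdefault(spec_id, None)
        current: Optional[int] = first_pass[spec_id]
        if passed and current is None:
            first_pass[spec_id] = revision
    return first_pass

per_spec = iterations_per_spec(log)
passed = [n for n in per_spec.values() if n is not None]
print(per_spec)
print("mean iterations to pass:", mean(passed))
print("never passed:", [s for s, n in per_spec.items() if n is None])
```

Tracked over time, a rising mean or a growing "never passed" list is the signal to look harder at how specs are written or at what the validation actually checks.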

The shift, not the demo

This is no longer a tooling decision. It's an operating model decision.

The teams pulling ahead aren't using better models. They've built systems — specifications, validation, orchestration — that make AI output reliable and repeatable.

"Might" is not engineering. Repeatable results are.

Has your organization made the shift — or are you still asking the 2024 version?