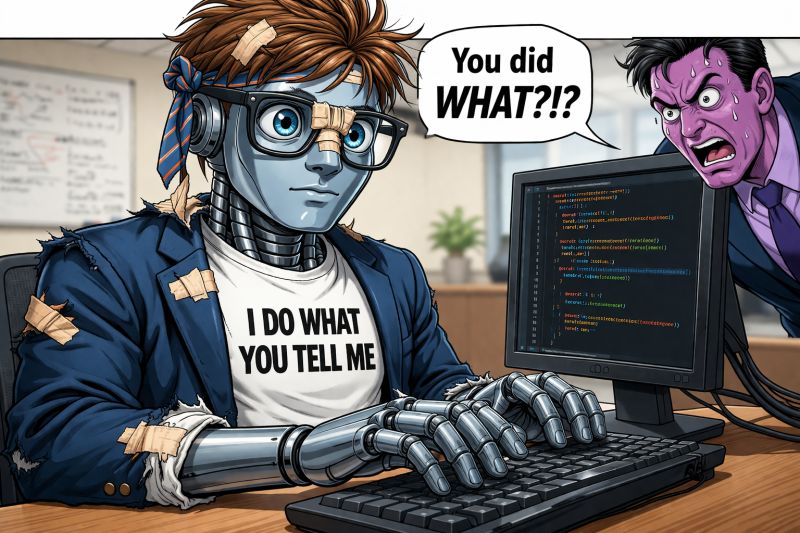

There's a psychological trap in working with coding agents: we tend to anthropomorphize them.

When something goes wrong, it can feel like they weren't paying attention. Misunderstood something obvious. Got lazy. Got careless.

That trap is dangerous. No amount of venting changes how coding agents operate.

A coding agent is not a junior developer

A coding agent is not a person. It is a system operating on the context you provided. Most "mistakes" stem from one of three things:

- Missing context

- Ambiguous instructions

- Conflicting signals

That is not a failure of effort. It is a failure of specification.

This is why frustration never helps. The agent is not slacking off. It is computing a probability distribution over next tokens, shaped by the attention mechanism acting on whatever reached its context window. Give it incomplete context, and it fills the gaps with plausible output. Give it ambiguous context, and it resolves the ambiguity the way the training distribution suggests, which may not be the way you intended. Give it conflicting context, and it tends to follow one signal over the other, using a tie-breaking rule you would not have chosen.

None of that is a character defect. It is a mechanism. Once you see it as a mechanism, the impulse to argue with the model disappears. You argue with the context instead — which, helpfully, is something you can actually change.

The shift from prompts to environment

OpenAI demonstrated this at scale: a team of three engineers generating roughly a million lines of production code with coding agents in five months. Their success didn't come from clever prompting. It came from the harness they built around the agent.

That is the real shift. It is not prompt engineering. It is environment design.

Prompt engineering treats the agent like a person you have to coax. Environment design treats the agent like a component in a system whose inputs you are responsible for. The first asks, "how do I phrase this better?" The second asks, "what does the agent need to see, in what order, with what constraints, to make the correct answer the path of least resistance?"

The teams that crossed from pilots to production made exactly this shift. Every team still grinding prompt variations is asking a 2024 question in 2026.

The four primitives of environment design

In practice, environment design reduces to four primitives. Each of them is something you build, not something you write once.

Memory. Persistent context the agent loads automatically at the start of every task. Project conventions. Architectural decisions. The pattern your team uses for error handling. The reason you chose Vitest over Jest. Memory is the difference between explaining yourself again every session and having the agent start already fluent in how your codebase actually works. Without memory, every session is a cold start, and cold starts are where "confidently wrong" output comes from. With memory, the agent's first token is already aimed at your team's answer, not at the training distribution's average answer.
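To make the "loads automatically" part concrete, here is a minimal sketch of memory as a directory of Markdown notes that gets prepended to every task. The directory layout and the names `load_memory` and `build_context` are assumptions for illustration, not the API of any particular agent tool.

```python
from pathlib import Path


def load_memory(memory_dir: Path) -> str:
    """Concatenate every Markdown note in memory_dir, in sorted filename order."""
    sections = []
    for path in sorted(memory_dir.glob("*.md")):
        body = path.read_text(encoding="utf-8").strip()
        # The filename (without extension) becomes the section title.
        sections.append(f"## {path.stem}\n{body}")
    return "\n\n".join(sections)


def build_context(memory_dir: Path, task: str) -> str:
    """Put persistent memory ahead of the task so no session starts cold."""
    memory = load_memory(memory_dir)
    task_block = f"# Task\n{task}"
    return f"{memory}\n\n{task_block}" if memory else task_block
```

The point of the design is that nobody has to remember to paste the conventions in. A note such as "why we chose Vitest over Jest" is written once and then rides along with every task.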

Skills. Reusable patterns of reasoning, packaged as named capabilities. A commit skill. A code-review skill. A migration-generator skill. Each is a small Markdown file with a trigger condition and a set of instructions. The agent loads the skill on demand, executes its pattern, and moves on. Skills turn every workflow you used to re-explain into a callable primitive. The win is compounding: every skill you write is a piece of friction that never slows the team down again.
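As a rough sketch of "a small Markdown file with a trigger condition and a set of instructions", the snippet below parses a hypothetical three-part skill file (title line, `trigger:` line, instructions) and selects skills by keyword. The file layout and the keyword-matching rule are assumptions made for this example; real agent tools define their own skill formats and activation logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    name: str
    trigger: str        # keyword that activates the skill (assumed convention)
    instructions: str


def parse_skill(markdown: str) -> Skill:
    """Parse the assumed layout: '# name', then 'trigger: keyword', then instructions."""
    lines = markdown.strip().splitlines()
    name = lines[0].lstrip("#").strip()
    trigger = lines[1].split(":", 1)[1].strip().lower()
    instructions = "\n".join(lines[2:]).strip()
    return Skill(name=name, trigger=trigger, instructions=instructions)


def select_skills(task: str, skills: list[Skill]) -> list[Skill]:
    """Return only the skills whose trigger keyword appears in the task text."""
    task_lower = task.lower()
    return [skill for skill in skills if skill.trigger in task_lower]
```

Only the selected skills' instructions are added to the context, which is what "loads the skill on demand" buys you: the commit rules are present when committing and absent otherwise.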

Workflows. Plan → critique → implement → test. Or discover → specify → generate → validate. Or whichever decomposition your work actually requires. A workflow is a directed graph of steps, each with inputs, outputs, and validation. It does not run in a single prompt. It runs across a sequence of agent actions, with state passed between them. Without workflows, multi-step work is a single long conversation that degrades as context fills. With workflows, each step has its own clean context and its own gate.
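One way to picture steps with inputs, outputs, and validation is the sketch below. It uses a linear chain, the simplest directed graph, and the names `Step`, `GateFailed`, and `run_workflow` are illustrative assumptions. In a real harness each `run` callable would invoke an agent; here it is any function from a context dict to an output dict.

```python
from dataclasses import dataclass
from typing import Callable


class GateFailed(Exception):
    """Raised when a step's output does not pass its validation gate."""


@dataclass
class Step:
    name: str
    inputs: tuple[str, ...]            # state keys this step is allowed to see
    run: Callable[[dict], dict]        # produces new state keys
    validate: Callable[[dict], bool]   # gate applied to the step's output


def run_workflow(steps: list[Step], state: dict) -> dict:
    """Run steps in order, passing state forward and stopping at the first failed gate."""
    state = dict(state)
    for step in steps:
        # Clean context: the step sees only the keys it declared, not the full history.
        context = {key: state[key] for key in step.inputs}
        output = step.run(context)
        if not step.validate(output):
            raise GateFailed(f"step '{step.name}' failed its validation gate")
        state.update(output)
    return state
```

Because each step receives only its declared inputs, the implement step never sees the back-and-forth that produced the plan, only the plan itself. A failed gate stops the run at a named step rather than letting a bad intermediate result flow downstream.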

Agents. Roles defined by constraints, not personalities. An agent is not a "helpful assistant." It is a declared role with a capability list, a permission envelope, and a contract for the handoffs it participates in. "Code review agent" is a role with a clear input (a diff), a clear output (pass/fail with issue list), and boundaries on what it can touch. The moment you start defining roles instead of personas, the orchestrator knows where to route work and the agent knows what "correct" looks like for its scope.
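A role defined by constraints can be written down as data. The sketch below is one possible shape, with hypothetical field names: a capability list, a permission envelope expressed as writable path patterns, and a handoff contract expressed as an input kind and an output kind. The `route` function stands in for whatever orchestrator you use.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class AgentRole:
    name: str
    input_kind: str                   # what the role accepts, e.g. "diff"
    output_kind: str                  # what the role must hand back
    capabilities: frozenset[str]      # declared capability list
    writable_paths: tuple[str, ...]   # permission envelope, as glob patterns

    def may_write(self, path: str) -> bool:
        """A write is allowed only if the path matches a declared pattern."""
        return any(fnmatchcase(path, pattern) for pattern in self.writable_paths)


def route(input_kind: str, roles: list[AgentRole]) -> AgentRole:
    """Pick the single role whose contract accepts this kind of input."""
    matches = [role for role in roles if role.input_kind == input_kind]
    if len(matches) != 1:
        raise LookupError(f"expected exactly one role for '{input_kind}', found {len(matches)}")
    return matches[0]
```

A code review role in this shape would take `"diff"` as input, produce a review report, and declare an empty `writable_paths`, so it can comment on anything and change nothing.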

These four primitives compose. Memory feeds agents. Skills are invoked inside workflows. Workflows orchestrate agents. The whole thing is engineered, not coaxed.

The diagnostic reframe

Here is the single most useful change you can make to your team's debugging culture this month.

When an agent produces wrong output, replace this question:

"Why did it do that?"

With this one:

"What in the context made that the most likely outcome?"

The first question is about the agent. It has no useful answer, because the agent samples from a probability distribution; it is not a deliberating mind. The second is about the input, and it almost always has a concrete answer: the output reflects what the attention mechanism weighted most heavily, and what it weighted most heavily was something in the context window. A missing requirement. An ambiguous phrase. A conflict between a stated constraint and an in-memory convention. An example that pointed the wrong direction.

Find what in the context produced the wrong weight, fix that, and regenerate. The loop collapses. The frustration evaporates. You are no longer arguing with the model; you are editing the specification the model operated on.

Reacting vs. engineering

The distinction between reacting and engineering is the entire shift this playbook is built on.

Reacting is the old loop. The output is wrong. You rephrase the prompt. The output is still wrong. You rephrase again. Eventually you get something acceptable, you ship it, and next week the exact same problem reappears because nothing about the environment changed. You did not solve the problem. You worked around it, in one conversation, at one moment, with one person who happened to find a phrasing that worked.

Engineering is the new loop. The output is wrong. You classify the failure (missing context, ambiguity, conflict). You update the environment, whether that is the memory file, the skill definition, the workflow's validation gate, or the agent's scope, so that the next run, with the next person, under the next variation of the same task, produces the right output by construction. The effort goes into the environment once and pays off on every subsequent invocation.
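If you want the classification step to leave a trace, a small failure log is enough. The sketch below is an assumption about how a team might record it: the three failure classes come from this article, while the record fields and the `weakest_spots` tally are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class FailureClass(Enum):
    MISSING_CONTEXT = "missing context"
    AMBIGUITY = "ambiguous instructions"
    CONFLICT = "conflicting signals"


@dataclass(frozen=True)
class FailureRecord:
    task: str
    failure_class: FailureClass
    environment_fix: str   # which artifact was changed, e.g. a memory note or a gate


def weakest_spots(records: list[FailureRecord]) -> list[tuple[FailureClass, int]]:
    """Count failures per class, most frequent first, to show where to invest next."""
    return Counter(record.failure_class for record in records).most_common()
```

Every record names the environment artifact that changed, so a wrong output that was only fixed by rephrasing has nothing to put in that field. That gap is the signal that the team reacted instead of engineering.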

The irony that surprises most leaders: the more you treat the agent as a system rather than a person, the more "intelligent" its output becomes. Not because the model changed. Because the environment changed, and the environment is what determined the output in the first place.

What to do this week

- Ban "why did it do that?" in retros and standups. Replace it, every single time, with "what in the context made that the most likely outcome?" The conversation will change within a week. What was blame becomes diagnosis.

- Turn one repeated explanation into a skill. If someone on your team has explained the commit format, the code-review protocol, or the test-naming convention more than twice this month, that is a skill waiting to be written. Markdown file. Trigger. Instructions. Commit it.

- Audit your most-used agent workflow for the four primitives. Memory — does it load automatically? Skills — are the repeated patterns named and callable? Workflows — is the work decomposed into gated steps, or is it one long prompt? Agents — are the roles constrained, or is everything going to "the AI"? Each missing primitive is a concrete build task, not a vague aspiration.

- Stop rewarding clever prompts. Start rewarding environment investments. What you measure is what you get. If a senior engineer saves the team a day by updating the memory file, that is the work to celebrate. Not the clever one-shot that got lucky.

The shift, one more time

A coding agent is not a person. It is a system operating on the context you provided.

Reacting is asking the agent to change. Engineering is changing what the agent sees.

The more you treat the agent as a system rather than a person, the more intelligent its output becomes.

That is not prompt engineering. That is environment design — and that is the discipline that compounds.